The Problem

Running a content operation across multiple brands means doing the same work over and over: find a trending topic, research it, write an article, make a featured image, format it for WordPress, then do it again for every platform. Each step involves a different tool, and most AI solutions are just wrappers around a single API call with no reliability guarantees.

What This Pipeline Does

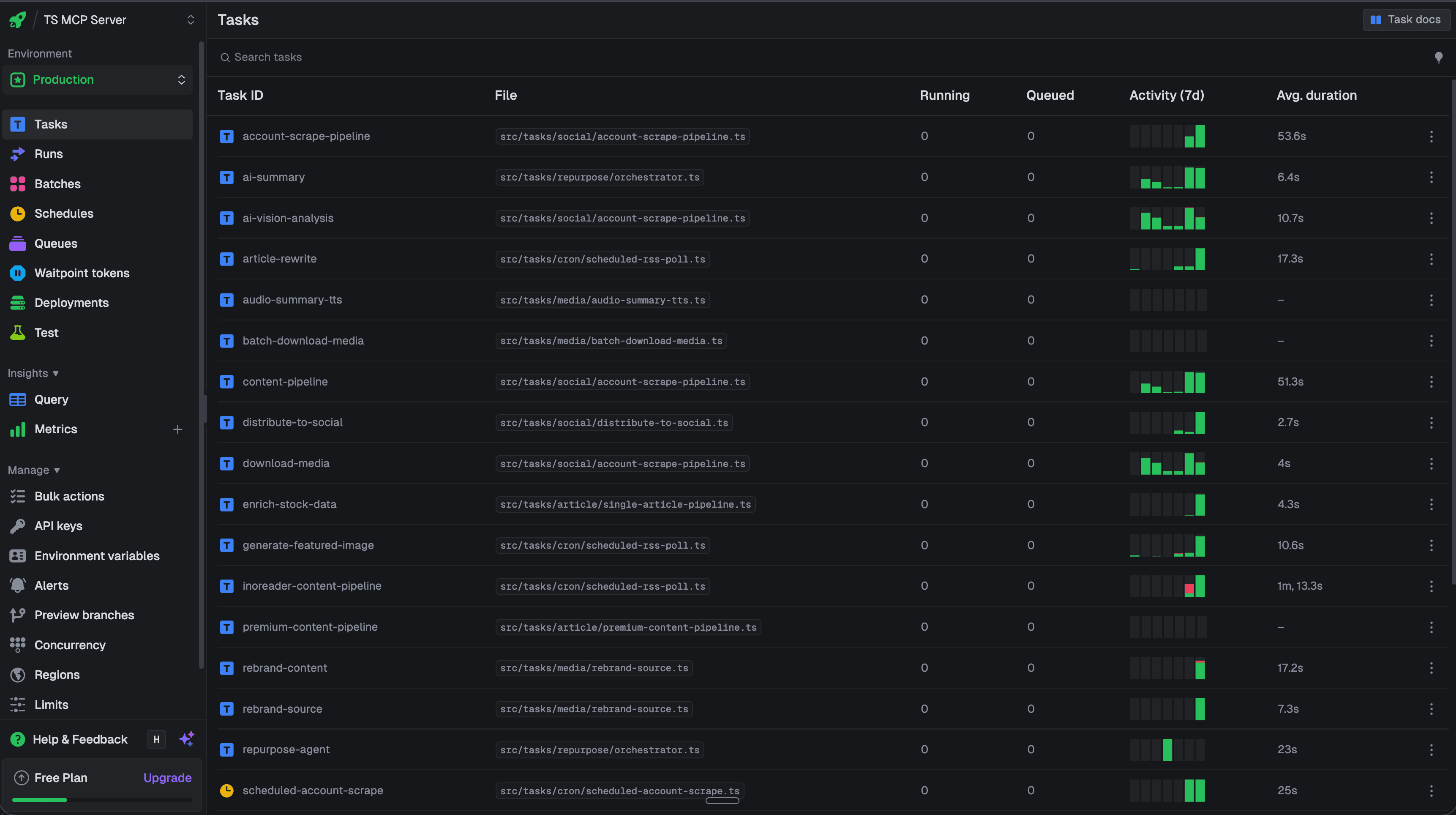

This is a production content automation system built on Trigger.dev v4 with 30+ durable background tasks. It handles the full lifecycle: ingest content from RSS feeds and social platforms, run deep web research, rewrite articles with AI, generate images, audit quality, and publish to WordPress. Every step runs as an independent, retryable task.

The pipeline processes hundreds of articles per week across multiple verticals (tech, sports, finance, entertainment, food). Each vertical gets its own AI persona with prompts managed and versioned through Langfuse. Every single LLM call is traced with full cost, latency, and quality scoring.

Why Trigger.dev

AI content generation is inherently unreliable. LLMs sometimes return malformed HTML, image generation APIs timeout, research queries fail. Trigger.dev gives us durable execution where each step survives crashes and retries automatically, concurrency controls to respect API rate limits, and observability into every run. It's the difference between a demo that works sometimes and a system you can trust to run while you sleep.

Pipeline Features

Built for reliability, observability, and scale. Not another LLM wrapper.

Durable Execution

Every task survives crashes, restarts, and timeouts. Long-running AI operations (Gemini rewrites, FLUX image generation) run reliably with automatic retry and exponential backoff.

Full Observability

Every LLM call traced through Langfuse with token counts, latency, cost breakdowns, and prompt version tracking. Quality scores auto-computed for each article rewrite.

Concurrency & Queuing

Tasks queue intelligently with configurable concurrency limits. Article rewrites process 3 at a time, social ingestion batches by platform, and image generation stays within API rate limits.

Modular Task Architecture

Each pipeline step is an independent task that can be triggered, tested, and debugged in isolation. Tasks compose into larger workflows through triggerAndWait chaining.

Quality Gates

HTML cleanup catches 7 common LLM output quirks (markdown-in-HTML, unclosed tags, etc.). Citation audit validates every URL against Tavily research sources. FAQ schema auto-extracted from content.

Multi-Persona Publishing

Articles rewritten with persona-specific prompts managed in Langfuse. Each vertical (tech, sports, finance, entertainment) gets its own voice, style, and WordPress author attribution.

Core Pipelines

Article Rewrite Pipeline (v13)

10-step durable workflow: ingest URL, deduplicate, scrape OG image, classify vertical, run Tavily research, rewrite with Langfuse prompts, generate FLUX featured image, audit citations, build FAQ schema, and publish to WordPress. Each step retries independently.

Social Ingestion Pipeline

Pulls content from Instagram, TikTok, YouTube, Twitter, Threads, and LinkedIn via Apify actors. Normalizes everything into a unified schema, stores in Supabase, and triggers downstream content generation automatically.

Content Repurposing

Takes any source (article, social post, video) and generates 6 output formats: social captions for 7 platforms, blog drafts, video/podcast scripts, FAQ schema, and SEO meta tags. All managed through Langfuse prompt versioning.

Scheduled RSS Polling

Cron-triggered task that checks RSS feeds from configured sources, deduplicates against existing articles, and automatically queues new content into the rewrite pipeline. Runs every 15 minutes with backpressure controls.

Tech Stack

By the Numbers

30+

Production Tasks

10

Pipeline Steps

6

Content Formats

100s

Articles / Week